Jed Quiaoit

Josh Argo

AP Statistics 📊

265 resourcesSee Units

"[You] may be surprised to learn that there is variability in slope. In [your probable] experience in previous courses, the slope of the line of best fit does not vary for a particular set of bivariate quantitative data. However, suppose that every student in a university physics course collects data on spring length for 10 different hanging masses and calculates the least-squares regression line for their sample data. The students’ slopes would likely vary as part of an approximately normal sampling distribution centered at the (true) slope of the population regression line relating spring length to hanging mass.

In this unit, [you'll] learn how to construct confidence intervals for and perform significance tests about the slope of a population regression line when appropriate conditions are met!" -- College Board, AP Statistics Course Description

Recap time!

In Unit 3, you got introduced to concepts related to linear regression: slope, y-intercept, R^2, standard deviation of the residual s, and standard error of the slope in context from computer output. We also placed huge emphasis on avoiding deterministic language (e.g., “a 1-foot increase in X is associated with a 0.445-point increase in Y”) in favor of framing the association in terms of potential outcomes (i.e., “a predicted 0.445-point increase”). Now, we'll apply what we've learned in the past couple units and connect slopes to inferential statistics! 😌

Recap Time: What is "Inference"?

Remember from Units 6-8, that inference is a huuuuge part of statistics. In fact, it is the most important and useful part of the AP Statistics course (and it's also tested very heavily). Inference is the act of using a sample to either make a prediction or test a claim about a population parameter. 🔮

In Unit 8, we looked at a more complicated way of doing inference for categorical data by using inference procedures for categorical data with multiple categories (data presented in a two way table). In this unit, we are going to look at a more complex inference procedure for quantitative data by looking at bivariate data instead of univariate data. Therefore, our data will be presented in a scatterplot.

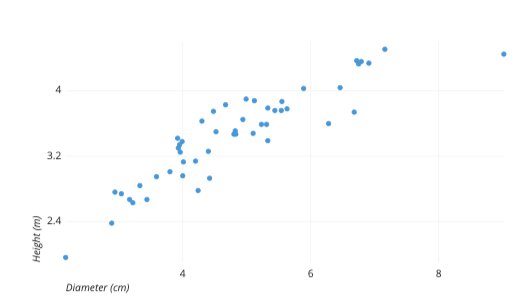

Scatterplots

A scatterplot is the most useful way to display bivariate quantitative data. One variable for each data point is displayed on the x-axis and one variable is displayed on the y-axis. As seen in the example above, this often allows us to see correlation or patterns in our data points. 📈

Explanatory Variable

The explanatory variable, or independent variable, is the variable that is typically found along the x axis. One way to remember this is that this variable is what does the “explaining” of the patterns we are generally seeing in the overall layout of the scatterplot.

Response Variable

The response variable, or dependent variable, is the variable that is typically found along the y axis. One way to remember this is that this variable “responds” to the other variable in building our pattern. Also, it “depends” on the other variable.

Example Variable

For example, let’s say we are investigating the correlation between shoe size and height. Ask yourself, “Does shoe size depend on height, or does height depend on shoe size?” While either of these make sense and would give a similar pattern on a scatterplot, it is more reasonable to say that someone’s shoe size typically depends on their height. Therefore, shoe size is the dependent variable and should be found on the y axis.

Inference with Scatterplots

Again, as you can recall from Unit 2, our linear regression models have several parts: a slope, y-intercept, r value, and R^2 value. While an r value and R^2 value do a good job at determining how correlated our points are along a scatterplot, they don’t quite give us the inference procedure with hypotheses and being able to say that there is evidence of correlation. 🕵️

This is where our t-interval for slopes and t-test for a slope come in to give us not just one value for a slope, but a range of possible values that we can be confident contains the true slope of our regression model rather than just one prediction.

T-Interval

As with all of the other units involving inference, the first form of inference procedure we are doing is constructing a confidence interval. A confidence interval is a form of inference that allows us to predict the true slope of our regression line. While our sample scatterplot gives us one slope, adding in one point can change this model greatly. So rather than honing in on just one slope, adding a margin of error to that slope gives us a range of values that we can be pretty certain contains the true slope of our linear regression model for all possible points. 🤺

T-Test

The other form of inference in scatterplots is a t-test. In this type of inference, we will be testing a null hypothesis that states that the slope between our two variables is 0 (ie, there is no relation). After running our test, we can determine if there is enough evidence that hypothesis needs rejecting in favor of another hypothesis (that the slope is not 0). This type of test closely correlates with our r value in that the stronger our r value, the more likely we are to reject our null hypothesis. 📝

Big Questions in This Unit

Some questions that you'll be able to answer at the end of unit 9. Here are some possible answers we came up and see if they makes sense (or you'd like to contest them) later on!

- 💡 How can there be variability in slope if the slope statistic is uniquely determined for a line of best fit?

The slope of a line of best fit is not uniquely determined, but rather it is estimated based on sample data. Therefore, it is possible for the estimated slope to vary from sample to sample. This is why it is appropriate to perform inference about the slope of a population regression line based on sample data, because the sample slope is only an estimate of the true population slope.

- 💡 When is it appropriate to perform inference about the slope of a population regression line based on sample data?

It's appropriate to perform inference about the slope of a population regression line based on sample data whenever you want to draw conclusions about the slope of the population regression line based on your sample data. This is typically done when you want to test whether there is a significant linear relationship between two variables in the population, or when you want to estimate the strength and direction of this relationship.

For example, you might collect data on the heights and weights of a sample of people, and then use this data to estimate the slope of the population regression line that describes the relationship between height and weight in the population. If you find that the slope of the sample regression line is significantly different from zero, you can conclude that there is a significant linear relationship between height and weight in the population.

Note that in order to perform statistical inference about the slope of a population regression line, you must assume that your sample is representative of the population and that the sample data follows a certain statistical model (e.g., the linear regression model).

- 💡 Why do we not conclude that there is no correlation between two variables based on the results of a statistical inference for slopes?

We don't conclude that there is no correlation between two variables based on the results of a statistical inference for slopes because the slope of a line of best fit only represents the strength and direction of the linear relationship between the two variables. It is possible for there to be a nonlinear relationship between two variables, or for there to be no relationship at all, even if the slope of the line of best fit is not significantly different from zero.

For example, consider the case where there is a strong quadratic relationship between two variables, but the slope of the line of best fit is not significantly different from zero. In this case, the slope of the line of best fit would not be a good indicator of the strength and direction of the relationship between the two variables, and you would not be able to conclude that there is no correlation between the two variables based on the results of a statistical inference for slopes!

Circling back to the big picture of this unit, it's important to consider the nature of the relationship between two variables and to use appropriate statistical methods to test for and quantify this relationship, rather than relying solely on the slope of a line of best fit.

🎥 Watch: AP Stats Unit 9 - Inference for Slopes

Browse Study Guides By Unit

👆Unit 1 – Exploring One-Variable Data

✌️Unit 2 – Exploring Two-Variable Data

🔎Unit 3 – Collecting Data

🎲Unit 4 – Probability, Random Variables, & Probability Distributions

📊Unit 5 – Sampling Distributions

⚖️Unit 6 – Proportions

😼Unit 7 – Means

✳️Unit 8 – Chi-Squares

📈Unit 9 – Slopes

✏️Frequently Asked Questions

📚Study Tools

🤔Exam Skills

Fiveable

Resources

© 2025 Fiveable Inc. All rights reserved.